The gap between a creative concept and a live ad campaign has historically been filled with expensive friction. For product teams and performance marketers, the cost of producing a “test” video often rivals the cost of the actual campaign. You commission a script, hire an editor, source stock footage or a studio, and wait two weeks for a 15-second spot that may still deliver a sub-par click-through rate. This overhead forces teams to be conservative, often sticking to static images or safe, repetitive formats.

The emergence of the motion prototype changes the math of creative experimentation. By using an AI Video Generator, teams are now shifting their validation phase much earlier in the production cycle. Instead of guessing which visual hook will stop the scroll, they are shipping high-fidelity motion tests to small audiences within hours. This isn’t about replacing the final high-production commercial; it’s about ensuring that when you finally spend the big budget, you are spending it on a concept that has already been proven to convert.

The Shift from Static to Motion Testing

In performance marketing, the “static image era” is hitting a wall. Platforms like Meta and TikTok increasingly prioritize movement, but the production bottleneck remains. Most teams attempt to bridge this by animating static layers—the “Ken Burns” effect on a product shot—but these rarely capture the visceral attention required in a high-density feed.

The motion prototype is different. It uses generative models to create original cinematic or lifestyle footage that didn’t exist an hour ago. If a product team is launching a new outdoor gear line, they don’t need to wait for a mountain shoot to see if a specific lighting mood or “rugged” aesthetic resonates with their audience. They can generate ten variations of a hiker in different environments using an AI Video Generator and run a split test. The goal is to find the visual language that triggers engagement before the first real camera is even unboxed.
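To make "run a split test" concrete, here is a minimal sketch of how two variants might be compared on click-through rate with a standard two-proportion z-test. The function name and the click and view counts are illustrative assumptions, not figures from any real campaign or ad platform.

```python
import math


def two_proportion_z_test(clicks_a, views_a, clicks_b, views_b):
    """Return (z, two-sided p-value) for the CTR difference between B and A."""
    rate_a = clicks_a / views_a
    rate_b = clicks_b / views_b
    # Pooled click-through rate under the assumption that A and B perform the same.
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    if std_err == 0:
        # No clicks at all (or all clicks): nothing to distinguish.
        return 0.0, 1.0
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical numbers: variant A (forest scene) vs. variant B (summit scene).
z, p = two_proportion_z_test(clicks_a=40, views_a=2000, clicks_b=65, views_b=2000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

With ten variations in play, repeated pairwise comparisons raise the odds of a false positive, so a result like this is better treated as a rough screen than as proof that one visual direction wins.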

Speed as a Competitive Advantage

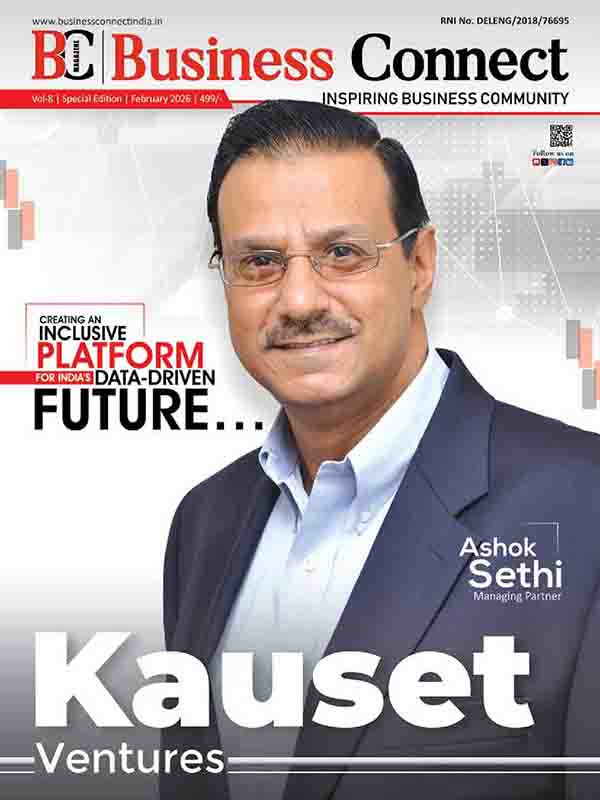

Marketing is increasingly a game of speed-to-market. When a trend hits or a competitor launches a new feature, waiting two weeks for a creative response is equivalent to sitting the round out. Creative teams are now using tools like MakeShot to consolidate their workflow, accessing multiple high-end models like Kling, Sora, and Veo in a single interface. This allows for a “fail fast” mentality where five different creative directions can be visualized by lunchtime.

The advantage here isn’t just speed; it’s the removal of the psychological “sunk cost” of creative work. When a team spends $10,000 on a video, they are emotionally and financially committed to making it work, even if the data suggests it’s a dud. When a prototype costs pennies and takes ten minutes to generate, walking away from a bad idea becomes easy.

The Workflow: From Prompt to Ad Creative

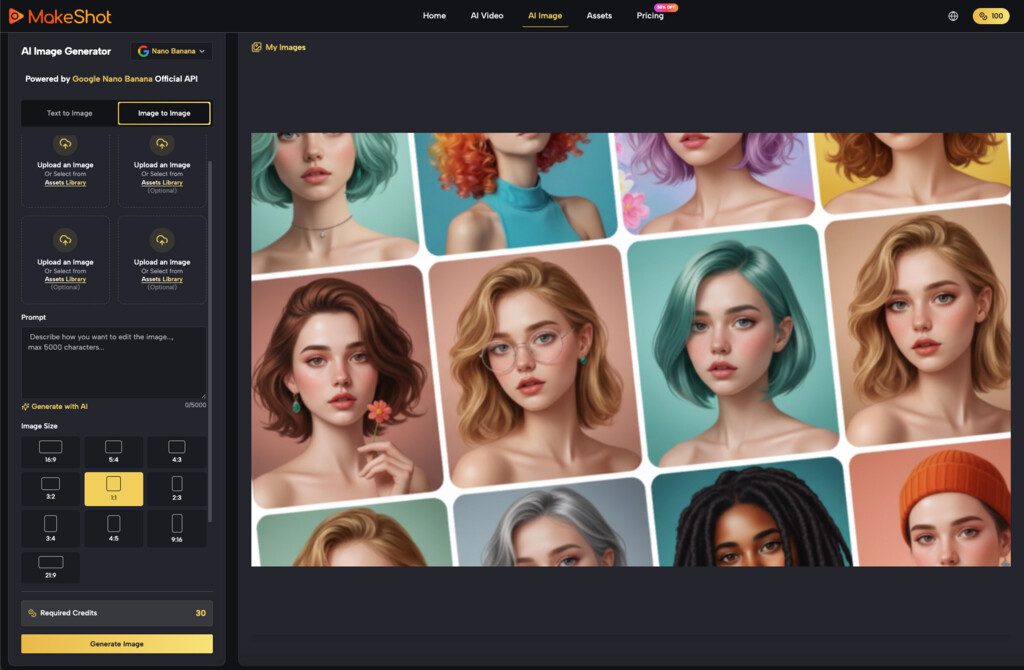

Effective AI video production doesn’t happen in a vacuum. It requires a structured pipeline that balances the randomness of generative AI with the specific requirements of a brand. Most professional workflows begin with the image layer. While text-to-video is impressive, starting with a high-fidelity image provides a “style anchor” that ensures consistency across multiple clips.

A marketer might start by generating a series of product-in-use shots. These aren’t generic stock photos; they are highly specific to the brand’s color palette and target demographic. Once the static “hero” shot is perfected, it is fed into an AI Video Generator to add specific motion. Whether it’s a smooth camera pan, a dramatic slow-motion splash of water, or a character interaction, the image-to-video process preserves the visual identity while adding the necessary kinetic energy.

Iterative Hook Testing

The first three seconds of a video ad—the hook—determine the ROI of the entire campaign. Marketers are now using AI to iterate exclusively on these three seconds. By changing only the motion or the background environment while keeping the product front and center, they can isolate variables.

For instance, does a “lo-fi” grainy aesthetic outperform a “hyper-realistic” 8K render for a Gen Z audience? In the past, testing this would require two separate shoots. Today, it requires two different prompts. By leveraging the AI Video Generator to swap styles instantly, teams can gather hard data on aesthetic preferences before moving into full production.
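One way to keep such a test honest is to build the prompt variants programmatically so that each one differs from the baseline in exactly one attribute. The sketch below assumes a hypothetical `HookSpec` structure and prompt wording; it only produces prompt strings and does not call any particular generator's API.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class HookSpec:
    """Hypothetical description of a three-second hook."""
    product: str
    style: str
    environment: str
    motion: str

    def to_prompt(self) -> str:
        # Illustrative prompt format; real tools may expect different phrasing.
        return f"{self.product}, {self.style} style, {self.environment}, {self.motion}"


def one_factor_variants(baseline, field, options):
    """Return specs that differ from the baseline in `field` only."""
    return [
        replace(baseline, **{field: option})
        for option in options
        if option != getattr(baseline, field)
    ]


baseline = HookSpec(
    product="insulated steel water bottle on a rock",
    style="lo-fi grainy",
    environment="alpine trail at sunrise",
    motion="slow push-in",
)

# Vary only the aesthetic; product, environment, and motion stay fixed.
for spec in [baseline] + one_factor_variants(baseline, "style", ["lo-fi grainy", "hyper-realistic"]):
    print(spec.to_prompt())
```

Because every other field is held constant, any difference in performance between the resulting clips can more plausibly be attributed to the style change rather than to an accidental change in setting or camera movement.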

Navigating Technical Constraints and Uncertainty

It is a mistake to view current generative video tools as a “magic button” that produces final, polished assets every time. There is a visible gap between what is possible and what is consistently repeatable. For example, temporal consistency (the ability of an AI to keep a character’s face or a product’s logo identical across multiple frames) remains a significant hurdle.

If you are trying to generate a specific, trademarked logo on a moving shirt, the AI will likely distort it during complex movements. Caution is warranted here: AI video is currently best suited for mood, atmosphere, and “lifestyle” shots rather than precise, literal representations of complex mechanical products. Expecting the AI to perfectly simulate the physics of a specific proprietary hinge or a complex liquid pour will often result in “hallucinations” that break the viewer’s immersion.

The Physics Problem

Another area of uncertainty lies in complex human-object interactions. While models like Sora and Kling have made massive strides in understanding physics, they still occasionally struggle with gravity and weight. A character might pick up a cup that has no mass, or their hand might merge with the object. Marketers must exercise practical judgment here; if the goal is a close-up of a human hand interacting with a product, traditional videography is often still the faster path to a professional result. The AI is better utilized for the “impossible” shots—the cinematic sweeps, the stylized environments, and the abstract motion that would otherwise require a massive CGI budget.

Integrating AI into the Creative Pipeline

The most successful teams don’t use AI as a standalone solution. They integrate it into a broader “hybrid” pipeline. The output from a platform like MakeShot serves as raw material. These clips are then brought into traditional editing suites like Premiere Pro or DaVinci Resolve.

In this workflow, the AI handles the “heavy lifting” of visual creation, while the human editor handles the pacing, color grading, and typography. This ensures the final ad feels like a professional brand asset rather than a “cheap” AI experiment. This hybrid approach also helps mitigate the “AI feel” that can sometimes distract viewers. By overlaying real text, professional voiceovers, and high-quality sound design, the generative origin of the footage becomes a background detail rather than the main focus.

Practical Evaluation: What to Look For

When evaluating whether a motion prototype is successful, teams should look beyond “does this look cool?” The evaluation should be grounded in specific campaign goals.

- Stop-Rate: Does the motion effectively halt the user’s scroll in a simulated feed environment?

- Brand Alignment: Even if the motion is fluid, do the lighting and texture match the brand’s existing style guide?

- Clarity of Offer: Does the generative background distract from the product, or does it enhance the value proposition?

The “no-nonsense” reality is that a visually stunning AI video that confuses the customer about what is being sold is a failure. Tool-savvy marketers use AI to simplify the visual message, not to clutter it with unnecessary complexity.

The Future of “Always-On” Creative

The traditional model of “big bang” creative launches—where a brand drops one major video every quarter—is being replaced by an “always-on” iteration cycle. Because the cost of generating new motion assets is now so low, there is no reason to run the same ad for months until it fatigues.

Instead, teams are moving toward a model of constant refreshment. If the data shows that engagement is dipping on Tuesday, a new set of motion variations can be generated and deployed by Wednesday. This level of agility was previously reserved for text-based search ads. Bringing this same level of granularity to video creative is the real power of the AI Video Generator.
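The "dip on Tuesday, refresh by Wednesday" loop depends on some rule for deciding that engagement has actually dropped. A minimal sketch, assuming a list of daily click-through rates and an arbitrary 20% drop threshold (both hypothetical choices, not platform defaults), could compare the most recent days against the days just before them:

```python
from statistics import mean


def needs_refresh(daily_ctr, window=3, drop_threshold=0.20):
    """Flag creative fatigue when the recent average CTR falls well below the prior average.

    daily_ctr: click-through rates ordered oldest to newest.
    window: number of days in each comparison period (assumed value).
    drop_threshold: relative drop that triggers a refresh (assumed value).
    """
    if len(daily_ctr) < 2 * window:
        return False  # Not enough history to compare two full windows.
    recent = mean(daily_ctr[-window:])
    previous = mean(daily_ctr[-2 * window:-window])
    if previous == 0:
        return False  # Avoid dividing by zero when there was no baseline engagement.
    relative_drop = (previous - recent) / previous
    return relative_drop >= drop_threshold


# Hypothetical six days of CTR for one ad: steady, then sliding.
history = [0.030, 0.031, 0.029, 0.022, 0.021, 0.020]
if needs_refresh(history):
    print("Engagement is dipping: queue a new batch of motion variations.")
```

Averaging over a few days rather than reacting to a single reading is a deliberate choice here, since daily click-through rates tend to be noisy; the right window and threshold would need tuning per account.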

Expectation Management for Launch Assets

While the speed of these tools is transformative, it is important to reset expectations regarding “one-click” perfection. A common pitfall for product teams is assuming that the AI will understand the nuances of a brand’s “vibe” without significant steering. Prompting is a skill of refinement, not just dictation.

You should expect to generate 20 to 30 variations to find the one “hero” clip that truly works. This is still orders of magnitude faster than a physical shoot, but it requires a different kind of labor—the labor of curation and critical selection. The human role shifts from “maker” to “editor and director.” If you aren’t prepared to sift through the “uncanny valley” results to find the gems, the process can feel frustrating.

Ultimately, the motion prototype is about reducing risk. By using generative video to validate ideas early, marketing and product teams can stop guessing and start shipping. The technology is no longer a futuristic novelty; it is a tactical tool for teams that need to iterate faster than the competition. Whether you are testing a new product hook or refreshing a tired ad account, the ability to turn a static concept into a moving reality in minutes is a fundamental shift in how creative work gets done.